How to Create a Cartoon with VEO 3 - A Step-by-Step Guide to Google AI Animation

Recommendation: Open VEO 3 and map a single step to validate the workflow for a cartoon with Google AI Animation. Define the stakeholder goals, prepare the image assets, and set a baseline style. This method helps you get quick feedback and anchors continuous improvement.

Step 1: Define the concept and choose a visual style that matches your audience. Capture the stakeholder goals and outline the elements that drive the story, including a few characters and the setting. These technologies enable rapid experimentation and help you learn which visuals translate to animation and how the image will appear in motion. To keep motion fluid, plan the key frames first so the flow stays smooth.

Step 2: Assemble assets for the project. Create clean line art, consistent color, and scalable characters. Export the drawings as PNG sequences or vector layers, and name them by function (character, background, prop). This reduces revisions later and keeps the workflow continuous as you build the scene. Include a simple asset log to speed up revisions and help stakeholders track details.

Step 3: Configure VEO 3 with Google AI Animation features. Upload your assets, define motion rules for key frames, and let the AI generate in-betweens. Verify continuity across shots and adjust timing to avoid jitter. Use these techniques to control pacing and keep the animation smooth. If a shot goes off style, tweak the prompts and re-run a quick pass until it aligns with the baseline image, and note which cue sets the tone, since that cue informs the approach. This process stays fairly simple while you iterate.

Step 4: Add an audio track and effects. If you aim for an ASMR vibe, include ASMR video cues in the background and synchronize the lip-sync with the dialogue. Keep the audio levels clear and avoid masking details in the visuals. You can add subtle room tone and ambient sounds to support the scene without overwhelming the image.

Step 5: Review with stakeholders. Gather details on what works and what to adjust. Iterate until you reach a steady, continuous look across scenes. Then render and export the output as a ready-to-share image sequence for publishing or pitching to a live audience, ensuring accessibility and readability for diverse audiences. If a shot needs a tweak, note the change in your log and return for a quick pass.

These steps help you turn a concept into a polished cartoon with VEO 3, aligning with Google AI Animation workflows and delivering a clear, testable result for any stakeholder. Focus on the important details and on getting consistent results frame by frame, and keep refining until the outcome matches your goals.

Set up VEO 3 and connect to Google AI Animation workspace

Install VEO 3 and connect it to the Google AI Animation workspace, then create a new project and link it to your Google Cloud storage for centralized asset management. Focus on usability; this setup can become a foundation that nurtures creative output for your audiences. Use a demo dataset to validate the workflow before scaling to production.

1. Prepare access and prerequisites:
   - Verify that you have admin rights in Google Cloud and that VEO 3 is installed on a workstation with at least 8 GB RAM and a dedicated GPU for speed.
   - Enable Google AI Animation APIs in the Google Cloud Console and generate an OAuth credential set for VEO 3.
   - Set up a clean workspace directory with subfolders assets/, prompts/, renders/ and outputs/ to keep a clean context for faster collaboration.

2. Link VEO 3 to Google AI Animation:
   - Open VEO 3, choose Integrations > Google AI Animation, and sign in with your Google account.
   - Authorize required scopes, select the target workspace, and choose a default project template to accelerate onboarding.
   - Confirm synchronization with Google Drive or Cloud Storage to ensure assets and renders publish automatically within the workspace.

3. Define project structure and naming:
   - Name the project clearly (e.g., Cartoon_Studio_Test) and set tags for quick discovery, such as creative, rollen, and prompt presets.
   - Establish a standard folder map: assets/ (videoweb, images), prompts/, scenes/, renders/ and outputs/ to support multiple chapters and clips.
   - Document the naming convention in a guide to accelerate onboarding for a new customer or new team member.

4. Import and organize assets:
   - Connect to videoweb libraries and import images in batches, keeping each batch under 50 assets for faster previews.
   - Attach sound assets to the project for quick auditioning; label audio files with clear metadata to support analytics and search.
   - For tests, create a demo set that includes simple cartoon scenes to validate animation timing and asset compatibility.

5. Set up prompts and context:
   - Prepare a base prompt template that describes scene context, actions and camera moves; store it under prompts/ for reuse.
   - Include variations using multiple prompts to test how the system interprets context and interaction, such as character motion, background parallax, and sound cues.
   - Use examples at this level of detail that your team can adapt quickly under tight deadlines.

6. Configure demo scenes and outputs:
   - Create a short demo reel with 2–3 short clips to verify rendering speed, color fidelity and asset import fidelity.
   - Set output profiles for resolution and compression; create multiple variants to fit web, mobile, and videoweb streaming requirements.
   - Enable sound checks and timeline synchronization to ensure audio aligns with animation frames in each render.

7. Analytics and monitoring:
   - Turn on analytics to track render times, asset load, and prompt execution times; review dashboards to identify bottlenecks.
   - Create a daily summary for audience stakeholders, highlighting milestones, engagement metrics and potential tweaks to prompts or assets.

8. Collaboration and feedback loop:
   - Invite team members and clients to the workspace with controlled permissions; use comments on scenes to capture who requested changes and why.
   - Establish a quick feedback loop around interaction points in scenes, such as character gestures or timing adjustments, to sustain momentum.
   - Document decisions and update prompts and context files accordingly to maintain a coherent creative thread across episodes.

9. First run and iteration plan:
   - Run a first iteration with a 10–20 second scene to verify asset integrity, prompt interpretation, and output quality.
   - Review within the team and capture learnings in the guide for future projects; align on a predictable cadence for iterations and releases.
   - Prepare a short plan to expand to a full episode set, using the lessons from this initial setup to inform creative direction and production throughput.
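The folder map and the "under 50 assets per batch" rule from the setup steps can be scripted. The sketch below is a minimal illustration and not part of VEO 3: the function names `scaffold_workspace` and `batch_assets` and the placeholder file names are assumptions made for this example, and it only touches the local file system.

```python
from pathlib import Path
import tempfile

# Folder names come from the setup steps; BATCH_LIMIT mirrors the
# "under 50 assets per batch" guidance. Everything else is illustrative.
WORKSPACE_DIRS = ["assets", "prompts", "scenes", "renders", "outputs"]
BATCH_LIMIT = 49


def scaffold_workspace(root: Path) -> list[Path]:
    """Create the standard subfolders under root and return their paths."""
    created = []
    for name in WORKSPACE_DIRS:
        folder = root / name
        folder.mkdir(parents=True, exist_ok=True)
        created.append(folder)
    return created


def batch_assets(paths: list[Path], limit: int = BATCH_LIMIT) -> list[list[Path]]:
    """Split a sorted asset list into import batches of at most `limit` files."""
    ordered = sorted(paths)
    return [ordered[i:i + limit] for i in range(0, len(ordered), limit)]


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp) / "Cartoon_Studio_Test"
        scaffold_workspace(root)
        # Empty placeholder files stand in for real images.
        for i in range(120):
            (root / "assets" / f"frame_{i:03d}.png").touch()
        batches = batch_assets(list((root / "assets").glob("*.png")))
        print([len(batch) for batch in batches])
```

Sorting before batching keeps batch contents stable between runs, which makes it easier to tell which batch a problem asset came from.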

Prepare source assets: sketches, references, and audio

Organize your creations in a single project folder, with a subfolder named creations to hold sketches, references, and audio. Keep sketches high-resolution (PNG/TIF, 300 dpi) and store references as JPEG/PNG. Archive audio as WAV for originals and MP3 proxies for quick previews. Use a consistent naming scheme like scene01_charA_sketch.png, scene01_ref.jpg, scene01_audio.wav to support your system workflow. Attach a metadata note to each asset that lists mood, tempo, and timing cues to support later refinement. For images, include origin and licensing notes so licensing details are accessible to editors. This approach reduces drop-off during reviews by enabling quick previews for Instagram and collaborators. If assets show viral-content watermarks or banana logos, replace them with neutral placeholders and keep the originals in a separate archive for auditing.
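A naming scheme is easier to keep consistent when it is checked automatically. The following sketch validates file names against the pattern implied by the three examples above. The exact rules (two-digit scene number, optional subject such as charA, and which extensions each asset kind may use) are assumptions inferred from those examples, so adjust them to your own convention.

```python
import re
from typing import Optional

# Pattern inferred from scene01_charA_sketch.png, scene01_ref.jpg and
# scene01_audio.wav; the subject part (e.g. "charA") is optional.
ASSET_NAME = re.compile(
    r"^scene(?P<scene>\d{2})"
    r"(?:_(?P<subject>[A-Za-z][A-Za-z0-9]*))?"
    r"_(?P<kind>sketch|ref|audio)"
    r"\.(?P<ext>png|tiff|tif|jpeg|jpg|wav|mp3)$"
)

# Assumed mapping of asset kind to allowed extensions.
ALLOWED_EXT = {
    "sketch": {"png", "tif", "tiff"},
    "ref": {"jpg", "jpeg", "png"},
    "audio": {"wav", "mp3"},
}


def parse_asset_name(filename: str) -> Optional[dict]:
    """Return the parsed parts of a valid asset name, or None if invalid."""
    match = ASSET_NAME.match(filename)
    if match is None:
        return None
    info = match.groupdict()
    if info["ext"] not in ALLOWED_EXT[info["kind"]]:
        return None
    info["scene"] = int(info["scene"])
    return info


if __name__ == "__main__":
    for name in ["scene01_charA_sketch.png", "scene01_ref.jpg", "scene1_sketch.png"]:
        print(name, "->", parse_asset_name(name))
```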

Sketches and references

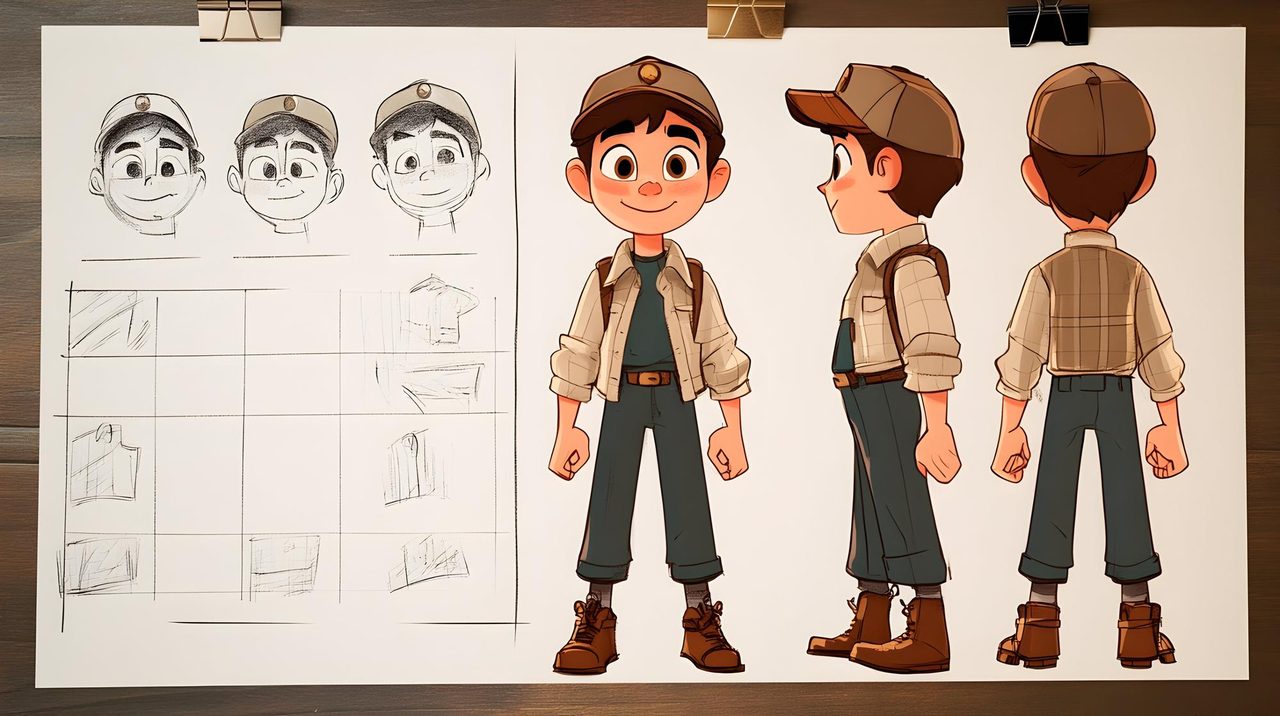

Use a carefully crafted prompt to steer the visual direction of your cartoon characters. Run a discovery pass to check proportions and gesture while assembling references. Label each image with a concise caption and a detailed note on capabilities (pose variety, lighting, texture) to help you get consistent results. Save images from trusted sources as consistent assets, and ensure they are available to the team in the system. Build funnels that move from thumbnail checks to full-resolution reviews, minimizing drop-off and speeding iteration. Know your direction and keep notes handy to improve accuracy over time.

Audio and licensing

For audio, store stems as WAV at 44.1 kHz / 16-bit and create short 5–10 second loops for fast reviews. Keep MP3 proxies for feedback rounds. Track licensing and usage rights for every file, and add a short caption describing mood, tempo, and timing cues. Ensure assets are available to editors and animators, and attach a simple prompt describing how the audio should align with visuals. This structure helps you refine timing later while preserving clear attribution and avoiding drop-off in later stages.
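The 44.1 kHz / 16-bit requirement can be checked before stems enter the project. This is a minimal sketch using Python's standard `wave` module; `check_wav` and `make_silent_wav` are hypothetical helper names, and the in-memory silent file exists only so the example runs without real audio.

```python
import io
import wave

EXPECTED_RATE = 44_100  # Hz, from the guidance above
EXPECTED_WIDTH = 2      # bytes per sample, i.e. 16-bit


def check_wav(source) -> list[str]:
    """Return a list of problems; an empty list means the file matches the spec.

    `source` may be a file path or a binary file-like object.
    """
    problems = []
    with wave.open(source, "rb") as wav:
        if wav.getframerate() != EXPECTED_RATE:
            problems.append(f"sample rate is {wav.getframerate()} Hz")
        if wav.getsampwidth() != EXPECTED_WIDTH:
            problems.append(f"sample width is {wav.getsampwidth() * 8}-bit")
    return problems


def make_silent_wav(rate: int, width: int, seconds: float = 0.1) -> io.BytesIO:
    """Build a short mono WAV of zero bytes in memory, for demonstration only."""
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(width)
        wav.setframerate(rate)
        wav.writeframes(b"\x00" * (int(rate * seconds) * width))
    buffer.seek(0)
    return buffer


if __name__ == "__main__":
    print(check_wav(make_silent_wav(44_100, 2)))
    print(check_wav(make_silent_wav(22_050, 2)))
```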

Design characters and environments with VEO 3 style parameters

Begin with a concrete base: lock a single reference prompt for VEO 3 characters and another for environments, then iterate. This important step creates a source for consistent shapes, palettes, and glowing accents. Use this generation framework to map how edits to silhouette, color blocks, and lighting ripple through scenes. Keep the focus on practices that you can repeat across shots, like a shared naming convention for parameters and a common color wheel. Introduce the concept of glow levels and edge treatments early, so week-to-week transitions stay smooth.

For characters, define a core silhouette, eye and mouth language, and a lighting rule set. The mood you want (playful, heroic, or mysterious) drives the line weight, curvature, and negative space. Within this, set a leading color family and a glow tier that you apply to highlights. Can you capture texture with minimal texture maps by relying on shading blocks? Yes: keep the texture guidance practical, and tie it to environment lighting so the character feels anchored. Use practices like test renders at 3–5 angles, and store successful prompts in a shared JSON-style file that your team can reuse.
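One way to keep successful prompts reusable is a small shared JSON file. The sketch below shows a possible shape for such a file. The `StylePreset` fields, the numeric glow tiers, and the default angles are assumptions for illustration, not a VEO 3 format.

```python
import json
from dataclasses import dataclass, asdict, field


@dataclass
class StylePreset:
    """Hypothetical record for one reusable character style prompt."""
    name: str
    mood: str          # e.g. "playful", "heroic", "mysterious"
    color_family: str  # leading color family for the character
    glow_tier: int     # 0 = no glow; higher values mean stronger highlights
    prompt: str
    test_angles: list = field(default_factory=lambda: [0, 45, 90])


def dump_presets(presets) -> str:
    """Serialize presets to JSON text keyed by preset name."""
    data = {preset.name: asdict(preset) for preset in presets}
    return json.dumps(data, indent=2, sort_keys=True)


def load_presets(text: str) -> dict:
    """Rebuild StylePreset objects from JSON text produced by dump_presets."""
    return {name: StylePreset(**data) for name, data in json.loads(text).items()}


if __name__ == "__main__":
    hero = StylePreset("hero_base", "heroic", "warm", 2, "bold silhouette, rim glow")
    text = dump_presets([hero])
    print(text)
    print(load_presets(text)["hero_base"] == hero)
```

Keying the file by preset name makes it easy to diff in version control when a prompt changes.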

For environments, pin horizon height, texture density, and material language (metal, glass, fabric) to a small set of presets. Establish a palette strategy aligned with Gemini-style prompts to keep tones harmonious across scenes. Within each shot, define how reflections, fog, and volumetric light interact with characters to maintain visual coherence. Let effects shine through the scene in a way that keeps characters legible and the scene readable on different devices. This approach helps you understand expectations from directors and writers and reduces rework during reviews.

To sustain momentum, integrate feedback loops into your workflow: snapshot prompts, quick notes on what changed, and a summary of how those changes affect mood and readability. Newsletter updates can capture learnings and provide a quick reference, so your team gains rapid alignment and the process stays transparent. By treating concepts as the source of the effort, you create a repeatable path from concept to final frames, which speeds up creation and ensures a consistent VEO 3 style across iterations.

| Parameter | Guidance |
|---|---|
| Character silhouette | Lock a bold base shape, test at three angles, apply rim glow sparingly. Track edge curvature to prevent odd silhouettes in movement. |
| Character lighting | Use a two-tier lighting rule: key light for form, glow layer for accents. Keep color temperature in a narrow range to maintain cohesion. |
| Color palette | Adopt a primary palette and a supporting accent set. Use Gemini-inspired blocks to align tones across shots; adjust saturation by scene mood. |
| Environment texture | Limit texture complexity to three states: smooth, mid, detailed. Tie texture density to distance from camera to preserve performance. |
| Environment lighting | Define sunlight direction and ambient fill. Add volumetric hints where depth is required to support characters in frame. |
| Mood and tone | Document one sentence per shot that describes the intended feel (hopeful, tense, whimsical) and map it to lighting, color, and gesture choices. |

Within this framework, you gain a stable baseline that supports quick iteration and clear communication. If a reviewer notes drift in style, refer back to the source prompts, adjust the color wheel constraints, and rerun a short set of tests. This approach aligns your understanding of expectations with practical outputs and keeps the process focused on tangible improvements rather than vague refinements.

Animate with the timeline: keyframes, easing, and lip-sync

Begin with a clear keyframe plan: lead pose at 0s, a secondary pose around 0.6s, and a final pose near 1.2s for a 1.5–2s clip. Hold each pose for 2–4 frames to keep motion readable, then refine spacing. Use ease-out for departures and ease-in for arrivals; keep limbs readable with gentle curves and a brief still moment after fast moves to anchor weight.
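To make the keyframe plan concrete, the sketch below converts the 0s, 0.6s, and 1.2s poses to frame numbers and interpolates between them with a curve that starts slowly and settles slowly. It assumes 30 fps and uses smoothstep as a stand-in for the easing described above; easing names differ between tools, so treat the curve as one possible choice. The arm-angle values are invented for the example.

```python
FPS = 30  # assumed frame rate


def smoothstep(t: float) -> float:
    """Slow departure and slow arrival: maps 0 -> 0, 0.5 -> 0.5, 1 -> 1."""
    t = max(0.0, min(1.0, t))
    return t * t * (3 - 2 * t)


def to_frame(seconds: float, fps: int = FPS) -> int:
    """Convert a time in seconds to the nearest frame number."""
    return round(seconds * fps)


def pose_value(frame: int, keys: list) -> float:
    """Interpolate a value at `frame` from (frame, value) keys sorted by frame."""
    if frame <= keys[0][0]:
        return keys[0][1]
    for (f0, v0), (f1, v1) in zip(keys, keys[1:]):
        if f0 <= frame <= f1:
            t = (frame - f0) / (f1 - f0)
            return v0 + (v1 - v0) * smoothstep(t)
    return keys[-1][1]  # hold the final pose after the last key


if __name__ == "__main__":
    # Hypothetical arm angle in degrees at the lead, secondary and final poses.
    keys = [(to_frame(0.0), 0.0), (to_frame(0.6), 90.0), (to_frame(1.2), 45.0)]
    for frame in range(0, 40, 3):
        print(frame, round(pose_value(frame, keys), 1))
```

Because the curve flattens at both ends, values change little in the first and last frames of each move, which gives the brief settle that anchors weight.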

For lip-sync, map audio phonemes to visemes on the timeline. Create a baseline of viseme keyframes every 3–4 frames at 30fps (roughly 100–133 ms) and adjust to match audio peaks. Maintain a steady speech rate to avoid jitter; when a mismatch appears, add a short mouth-hold to signal a stressed syllable. After drafting, replay the sequence to spot drift; nudge any timing gaps you identify in small increments rather than rebuilding from scratch.
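The phoneme-to-viseme pass can be sketched as a small function that places viseme keys on frames and skips keys that would land closer than three frames apart. The phoneme table below is hypothetical and far smaller than a production set, and the timings are invented; only the 30 fps rate and the 3-frame minimum come from the text.

```python
FPS = 30            # frame rate used in the text
MIN_GAP_FRAMES = 3  # lower end of the 3-4 frame spacing (100 ms at 30 fps)

# Hypothetical phoneme-to-viseme table; real projects use a fuller set.
PHONEME_TO_VISEME = {
    "M": "closed", "B": "closed", "P": "closed",
    "AA": "open", "OW": "round", "F": "teeth_on_lip",
}


def viseme_keys(phonemes, fps=FPS, min_gap=MIN_GAP_FRAMES):
    """Turn (start_seconds, phoneme) pairs into (frame, viseme) keyframes.

    A key is skipped when it falls within `min_gap` frames of the previous
    key or repeats the previous viseme, so the mouth holds its shape.
    """
    keys = []
    for start_seconds, phoneme in phonemes:
        frame = round(start_seconds * fps)
        viseme = PHONEME_TO_VISEME.get(phoneme, "rest")
        if keys:
            last_frame, last_viseme = keys[-1]
            if frame - last_frame < min_gap or viseme == last_viseme:
                continue
        keys.append((frame, viseme))
    return keys


if __name__ == "__main__":
    timed = [(0.0, "M"), (0.10, "AA"), (0.13, "P"), (0.30, "OW")]
    print(viseme_keys(timed))
```

Dropping keys that crowd the previous one is a blunt rule; a fuller version might keep the more visually important viseme instead of simply the earlier one.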

Use prompts to seed rough motion for your cartoon character. Generate multiple iterations and identify which strategies align best with the script. Attach the audio text to the lip-sync pass and ensure the name and branding appear in captions. For Instagram workflows, export high-quality clips and consider extra polish. You can adjust rates and options while you iterate; think about how the audience responds, then refine. Multiple passes, continued fine-tuning, and critical readability checks yield stronger results, and prompt-driven iteration can unlock smoother timing and more natural expression.

Incorporate ASMR-focused audio and satisfying visual cues

Start with a focused, low-volume ASMR audio bed and align it with minimalist, satisfying visual cues that reflect on-screen movement. Use subtle whispers, soft tapping, and gentle fabric textures tightly synchronized to key actions such as a button press or eyelid blink. This direct pairing creates immediate tactile resonance for viewers.

An improved workflow lets you analyze feedback and refine the balance between audio and motion in a data-driven loop. For sound, layer a base ambience, a whispered prompt, and subtle tactile textures, and use multiple assets aligned to each action. This helps uncover patterns in user responses and informs decisions, through text prompts, about how to fine-tune timing and intensity so the sequence feels natural.

For visuals, craft mesmerizing cues through a combination of soft lighting, parallax movement, and micro-interactions. Use smooth easing curves, gentle color shifts, and rounded corners to reinforce the audio narrative and keep focus on the next gesture. To understand where attention lands, align color and motion with the corresponding sound cue, ensuring movement remains coherent.

Craft prompts that describe expected reactions and test them using cutting-edge iterations. Run questions and experiments with multiple variants of audio textures and visuals, then compare timing and impressions to maximize alignment. While testing, track correlations between audio and motion to support better decisions and reduce iteration cycles, delivering a more immersive experience.
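One simple way to compare audio variants is to measure how far each sound cue lands from the on-screen action it supports. The sketch below computes per-action offsets in milliseconds and ranks variants by mean absolute offset. This is an offset measure rather than a statistical correlation, and the timestamps and variant names are invented for illustration.

```python
def sync_offsets_ms(action_times, cue_times):
    """For each action time (seconds), return the offset in ms to the
    nearest audio cue. Positive means the audio arrives after the action."""
    offsets = []
    for action in action_times:
        nearest = min(cue_times, key=lambda cue: abs(cue - action))
        offsets.append(round((nearest - action) * 1000))
    return offsets


def mean_abs_offset(offsets):
    """Average distance between cues and actions, ignoring direction."""
    return sum(abs(value) for value in offsets) / len(offsets)


def best_variant(action_times, variants):
    """Return the name of the variant whose cues sit closest to the actions."""
    return min(
        variants,
        key=lambda name: mean_abs_offset(sync_offsets_ms(action_times, variants[name])),
    )


if __name__ == "__main__":
    actions = [1.0, 2.5, 4.0]  # e.g. button press, blink, fabric fold
    variants = {
        "A": [1.02, 2.45, 4.10],
        "B": [1.00, 2.52, 4.01],
    }
    for name, cues in variants.items():
        print(name, sync_offsets_ms(actions, cues))
    print("closest sync:", best_variant(actions, variants))
```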

Accessibility and safety: maintain consistent loudness across tracks and offer a simple toggle to adjust ASMR intensity. Provide transcripts for the prompt audio and include keyboard-friendly controls for skip and loop. If you collaborate with a multilingual team, you can annotate key cues and synchronize them with on-screen actions to enhance comprehension and reach. This approach helps uncover new audiences while keeping the content engaging and respectful.

Render, export, and optimize for platforms and accessibility

Export a 1080p MP4 with H.264 video and AAC audio, include accurate captions, and generate three variants (1080p, 720p, 480p) to cover different industries and businesses across platforms and every stage of delivery. This approach improves load speed, keeps output quality consistent, and meets returning viewers' expectations. Use two-pass encoding to preserve image quality while keeping file sizes manageable; for long-form videos, tune bitrates by variant: 6–8 Mbps for 1080p, 3–5 Mbps for 720p, and 1.5–2 Mbps for 480p. Keep voice levels balanced with the music bed for intelligibility and consistent pacing. For generation workflows, automate captions, thumbnails, and language variants to accelerate output and reduce manual steps. You can tailor presets to your field and business; this basic setup delivers strong output and value for long and short videos across platforms.
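The bitrate ladder above translates directly into file-size estimates and encoder commands. The sketch below assumes ffmpeg with libx264 as the encoder, which the workflow does not specify, and a 128 kbps audio track. It only builds argument lists and does not run anything; output names such as out_720p.mp4 are placeholders.

```python
# Video bitrate ranges in kbps, taken from the text above.
LADDER = {1080: (6000, 8000), 720: (3000, 5000), 480: (1500, 2000)}
AUDIO_KBPS = 128  # assumed audio bitrate


def estimate_size_mb(height: int, seconds: float, audio_kbps: int = AUDIO_KBPS):
    """Return the (low, high) file size in megabytes for a clip length."""
    low, high = LADDER[height]
    return tuple(
        round((video + audio_kbps) * seconds / 8 / 1000, 1) for video in (low, high)
    )


def two_pass_commands(src: str, height: int, video_kbps: int,
                      audio_kbps: int = AUDIO_KBPS):
    """Build ffmpeg argument lists for a two-pass H.264 encode (assumed tool).

    Pass 1 analyses the video and discards output; pass 2 writes the MP4.
    On Windows, replace /dev/null with NUL.
    """
    common = [
        "ffmpeg", "-y", "-i", src,
        "-vf", f"scale=-2:{height}",  # keep aspect ratio, even width
        "-c:v", "libx264", "-b:v", f"{video_kbps}k",
    ]
    first = common + ["-pass", "1", "-an", "-f", "null", "/dev/null"]
    second = common + ["-pass", "2", "-c:a", "aac", "-b:a", f"{audio_kbps}k",
                       f"out_{height}p.mp4"]
    return first, second


if __name__ == "__main__":
    for height in LADDER:
        print(height, estimate_size_mb(height, seconds=60))
    first, second = two_pass_commands("master.mov", 720, 4000)
    print(" ".join(first))
    print(" ".join(second))
```

The estimate treats 1 Mbps as 1,000 kbps and 1 MB as 1,000,000 bytes, so real files will differ slightly because of container overhead.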

Platform-ready formats and asset bundles

Provide platform-specific variants in a single delivery package: include SRT or WebVTT caption tracks, a 16:9 master, a 9:16 vertical cut for stories, and a 1:1 square cut for feeds. Maintain consistent file naming and a simple manifest so editors and CMS managers can ingest quickly. Deliver thumbnails as 1280×720 PNGs or JPEGs at under 200 KB to reduce loading time, and keep image assets in a clear hierarchy within the project folder. For basic branding, keep a single color profile (Rec. 709) and a universal font stack to ensure visual consistency across environments.

Accessibility, testing, and QA

Verify captions line up with speech and provide transcripts for long videos; enable audio description tracks where needed for visually impaired audiences. Test playback on mobile, desktop, and smart TVs, checking speed, latency, and sync across platforms. Include keyboard-friendly navigation for any on-page players, and confirm color contrast meets accessibility guidelines. Record output metrics such as encoding time, file size, and bitrate consistency to refine pipelines and sustain long-term value for users who rely on clear, reliable visuals.
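The color-contrast check can be automated for caption and overlay colors. The sketch below assumes the guidelines in question are WCAG 2.x, which the text does not name, and uses that standard's relative-luminance formula with the 4.5:1 threshold for normal-size text at level AA.

```python
AA_NORMAL_TEXT = 4.5  # WCAG 2.x level AA minimum for normal-size text


def _linear(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb) -> float:
    """Relative luminance of an (R, G, B) color with 0-255 channels."""
    r, g, b = (_linear(value) for value in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background) -> float:
    """Contrast ratio between two colors, from 1.0 up to 21.0."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground, background) -> bool:
    """True when the pair meets the AA threshold for normal-size text."""
    return contrast_ratio(foreground, background) >= AA_NORMAL_TEXT


if __name__ == "__main__":
    white = (255, 255, 255)
    for caption_color in [(0, 0, 0), (118, 118, 118), (119, 119, 119)]:
        ratio = contrast_ratio(caption_color, white)
        print(caption_color, round(ratio, 2), passes_aa(caption_color, white))
```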
